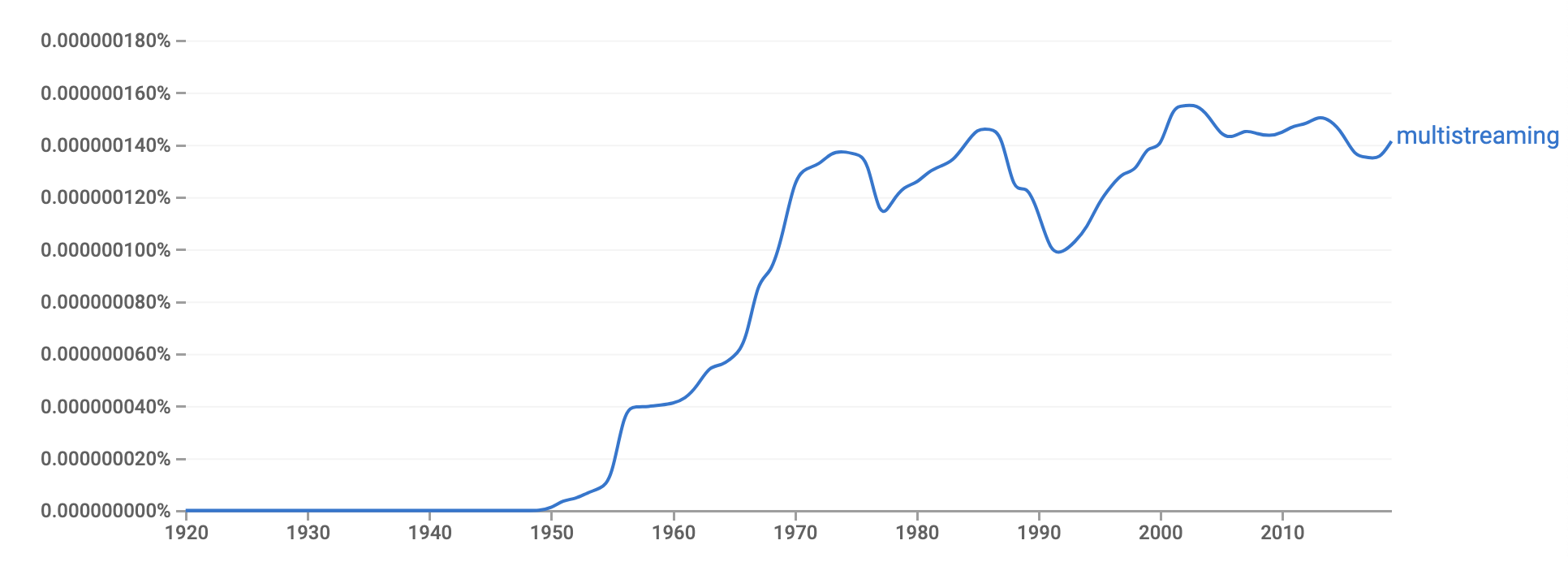

Ngram Viewer lets you search the breadth of Google Books and find out when a word or phrase first showed up in a book, how often it appeared, and so on. What this tool does is simply connect you to Google Ngram Viewer, which shows how the use of a given word has increased or decreased over time. We extend related work by using the Google Books Ngram Viewer (Google Ngram) to retrieve and adjust word frequencies from a large corpus of books (8 million books, or 6 percent of all books ever published) and to subsequently investigate word changes in terms of anxiety disorders, depression, and digitalization.

Setting up MySQL

Get MySQL

First you need to install and set up MySQL on your system. I’m on Mac OS and it was really straightforward to get MySQL up and running. Here’s the documentation on how to do it on Mac OS.

Get the data files for Google 1-gram, files 0-9. After you’ve downloaded the files, unzip them.

Import Google 1-gram into a MySQL database

Since I figured it would take a couple of hours to build the database, I first combined all 10 files into one csv-file using cat in Terminal.

Here I added a smoothing function and ran some more queries. I actually had to write a function to get direct.labels() to display annotations after the smoothed curve instead of after the line of the raw data.

Psychodynamic vs Psychoanalysis vs Psychoanalytic vs Psychotherapy

Gay vs Lesbian vs Homosexual vs Heterosexual

But isn’t this exactly like using Google Ngram Viewer except a lot sexier?

Well, yes, this is exactly like using Google Ngram Viewer except with sexier graphics. However, you could do much more with this data than with Google Ngram Viewer. One could, for instance, aggregate the data with another data set. For example, I could combine “socialism” and “capitalism” with data about which US political party was in power at the time. If you have more computer power than I do, you could work with 2-9-grams and generate much cooler data.
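The step of combining the ten unzipped 1-gram files with cat might look roughly like the sketch below. The file names are hypothetical stand-ins, not the real download names, and two tiny sample files are created so the commands run without the actual data.

```shell
# Hypothetical file names; adjust the glob to match your unzipped 1-gram files.
# Two tiny stand-in files are created so the sketch runs without the real data.
workdir=$(mktemp -d)
printf 'psychotherapy\t1990\t120\t100\t80\n' > "$workdir/1gram-0.csv"
printf 'Psychotherapy\t1990\t30\t28\t25\n' > "$workdir/1gram-1.csv"

# Concatenate every numbered part into a single file, in glob order.
# The output name does not match the glob, so it is never read as input.
cat "$workdir"/1gram-[0-9].csv > "$workdir/1gram-all.csv"

# Sanity check: the combined file should hold one line per input row.
wc -l < "$workdir/1gram-all.csv"
```

Writing the combined file under a name the glob cannot match avoids cat reading its own output, which matters once the inputs are large.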
Google Books may have been controversial in the book publishing industry, but it did have its fringe benefits. Of course, one could just use Google Ngram Viewer, but what’s the fun in that? And it won’t really give the output that I’m looking for. Since it’s case sensitive, queries like “psychotherapy” and “Psychotherapy” will give different results. Using R, one can combine match counts regardless of case lettering and display the results in a more intuitive way using ggplot2. If you’re not interested in the technical aspects of this post, you could just jump to the end of it to view an example of different applications of the n-gram database.
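To make the case-sensitivity point concrete, here is a rough command-line sketch of summing match counts across case variants. It is not the R approach this post uses. It assumes tab-separated rows laid out as ngram, year, match_count, page_count, volume_count, and the sample rows are made up for illustration.

```shell
# Made-up rows in the assumed layout:
# ngram <TAB> year <TAB> match_count <TAB> page_count <TAB> volume_count
data=$(mktemp)
printf 'psychotherapy\t1990\t120\t100\t80\n' > "$data"
printf 'Psychotherapy\t1990\t30\t28\t25\n' >> "$data"
printf 'psychotherapy\t1991\t140\t110\t90\n' >> "$data"
printf 'psychology\t1990\t999\t900\t800\n' >> "$data"

# Lower-case the ngram, keep rows equal to the target word,
# and sum match_count (column 3) per year (column 2).
combined=$(awk -F '\t' -v word="psychotherapy" '
  tolower($1) == word { total[$2] += $3 }
  END { for (year in total) print year "\t" total[year] }
' "$data" | sort)

echo "$combined"
```

With these sample rows, “psychotherapy” and “Psychotherapy” for 1990 collapse into a single yearly total, which is the behavior a case-sensitive lookup will not give you.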

Google Ngram is a corpus of n-grams compiled from data from Google Books. Here I’m going to show how to analyze individual word counts from Google 1-grams in R using MySQL. I’ve also written an R script to automatically extract and plot multiple word counts.
